Seems odd to me that you have two different Python folders involved (if you use a virtual environment, the system Python won't see modules installed in it, for example, and vice versa), but I don't know how his installation works at all, so that might be normal.
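The two-Python-folders point can be checked directly by asking the interpreter you are running. A minimal generic sketch, not part of WhisperJAV: `in_virtualenv` and `module_visible` are hypothetical helper names, and using `whisperjav` as the module name to probe is an assumption.

```python
import importlib.util
import sys


def in_virtualenv() -> bool:
    """True inside a venv: sys.prefix then points at the venv, sys.base_prefix at the base install."""
    return sys.prefix != sys.base_prefix


def module_visible(name: str) -> bool:
    """True if this interpreter can import the top-level module `name`.

    A venv and the system Python have separate site-packages, so the answer
    can differ depending on which `python` you launched.
    """
    return importlib.util.find_spec(name) is not None


if __name__ == "__main__":
    print("Interpreter:", sys.executable)
    print("Inside a virtual environment:", in_virtualenv())
    # Hypothetical probe; substitute the package you actually installed.
    print("whisperjav importable:", module_visible("whisperjav"))
```

Running it once with the system `python` and once with the venv's `python` shows whether the two see the same packages.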

Whisper (OpenAI) - Automatic English Subtitles for Any Film in Any Language - An Intro & Guide to Subtitling JAV

- Thread starter panop857


It's probably me screwing up the installation, lol. I'm really trying to understand Python and virtual environments like venv and pyenv, but I'm just not great at this stuff.

Edit: I'm up and running!


Oops, I mixed up the threads. I just posted this in the other thread:

Just a quick update: I just wrote a guide for using WhisperJAV:

WhisperJAV User Guide

The guide so far covers Windows, Mac, and Linux usage. It doesn't cover the Colab and Kaggle releases. Those notebooks basically implement ensemble mode. The difference is that on Kaggle each pass runs in parallel on its own dedicated GPU, while on Colab the two passes are run sequentially.
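The difference between the two notebooks can be pictured as the same two passes scheduled two ways. A minimal sketch, assuming a hypothetical `run_pass` function that stands in for one transcription pass (it only sleeps); this is not the notebooks' actual code, and the device names are illustrative.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_pass(name: str, device: str) -> str:
    # Hypothetical stand-in for one ensemble pass; a real pass would load a model on `device`.
    time.sleep(0.05)
    return f"{name} on {device}"


def run_sequential(passes: list[str]) -> list[str]:
    # Colab-style: a single GPU, so the passes run one after another.
    return [run_pass(name, "cuda:0") for name in passes]


def run_parallel(passes: list[str]) -> list[str]:
    # Kaggle-style: each pass gets its own GPU, and all passes run at the same time.
    with ThreadPoolExecutor(max_workers=len(passes)) as pool:
        futures = [pool.submit(run_pass, name, f"cuda:{i}") for i, name in enumerate(passes)]
        return [f.result() for f in futures]


if __name__ == "__main__":
    print(run_sequential(["pass1", "pass2"]))
    print(run_parallel(["pass1", "pass2"]))
```

With one GPU per pass, wall-clock time is roughly that of the slower pass instead of the sum of both.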


EDIT: I fixed it. For Mac users using Brew, you might need to run:

```bash
brew install portaudio
pip install pyaudio
```
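After installing, you can confirm that PyAudio (imported as `pyaudio`) loads in the same Python environment WhisperJAV runs in. A minimal sketch; `try_import` is a hypothetical helper and the printed hint just repeats the commands above.

```python
import importlib


def try_import(name: str) -> tuple[bool, str]:
    """Try to import `name`; return (ok, error message)."""
    try:
        importlib.import_module(name)
        return True, ""
    except ImportError as exc:
        # A compiled extension that fails to load (e.g. a missing native library)
        # is also reported as ImportError.
        return False, str(exc)


if __name__ == "__main__":
    ok, err = try_import("pyaudio")
    if ok:
        print("pyaudio imported fine")
    else:
        print("pyaudio failed to import:", err)
        print("On macOS with Homebrew, try: brew install portaudio && pip install pyaudio")
```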

Thanks. I added them to the install script and to the docs.

Again, I don't have a Mac to test, so just let me know if anything pops up.

I ran in "Balanced" mode, and it worked beautifully (if slowly, since it was CPU-only: about 2 hours for a 2-hour video). The quality of the translation is really, really good. Thank you for your hard work!

I'm now trying "Transformers" mode, which supports Metal, as I would like to give you some benchmarks between a Mac mini M1 (Geekbench Metal 32K) and an MBP M1 Pro (Geekbench Metal 68K). It initially tried to just translate without transcribing, but I think setting both the initial Transcription tab and the Ensemble tab to Transformers mode has fixed it. Running tests on both machines now.


Thanks, that would be helpful.

Meanwhile, give ensemble mode 2-pass a try. You will like the results.

I'll be sure to give them all a workout!


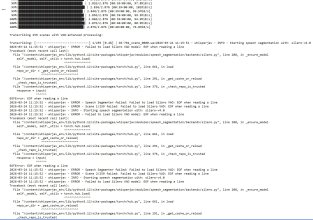

I'm seeing this error in the logs (on both Macs), but it still seems to run. Is it not that important?

```
/Users/Toast/venvs/whisperjav/lib/python3.12/site-packages/requests/__init__.py:113: RequestsDependencyWarning: urllib3 (2.6.3) or chardet (7.0.1)/charset_normalizer (3.4.4) doesn't match a supported version!
```


Yes, that is a harmless dependency version warning.
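If the message is just noise, it can be filtered by its text with the standard `warnings` module. A minimal sketch: the helper name is hypothetical, and where (or whether) to call it inside WhisperJAV is not covered in this thread. Matching on the message rather than on the `RequestsDependencyWarning` class is deliberate, since the class can't be referenced before `requests` is imported.

```python
import warnings


def silence_requests_version_warning() -> None:
    """Ignore requests' urllib3/chardet/charset_normalizer version-mismatch warning.

    Call this *before* `import requests`: the warning is emitted at import time,
    so a filter installed after the import has no effect.
    """
    warnings.filterwarnings(
        "ignore",
        message=r".*doesn't match a supported version",
    )
```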

Sorry for all the Mac questions!


I am trying the Metal-enabled Transformers mode:

Based on macOS Activity Monitor, Transformers mode is using only CPU, no GPU cycles.

```
2026-03-06 12:12:59 - whisperjav - INFO - Step 3/5: Transcribing with HF Transformers...
2026-03-06 12:12:59 - whisperjav - INFO - Transcribing full audio file...
2026-03-06 12:13:02 - whisperjav - INFO - Loading HF Transformers ASR pipeline...
2026-03-06 12:13:02 - whisperjav - INFO - Model: kotoba-tech/kotoba-whisper-bilingual-v1.0
2026-03-06 12:13:02 - whisperjav - INFO - Device: cpu
2026-03-06 12:13:02 - whisperjav - INFO - Dtype: torch.float32
2026-03-06 12:13:02 - whisperjav - INFO - Attention: sdpa
2026-03-06 12:13:02 - whisperjav - INFO - Batch: 8
`torch_dtype` is deprecated! Use `dtype` instead!
Device set to use cpu
```

I have used your new guide to check: my machine is detected as MPS/arm64, and I updated PyTorch to make sure it was the default build and not the CUDA one.

Is there anything else I can do to force HuggingFace Transformers mode to use MPS?
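To see whether PyTorch itself can use Metal, you can ask it directly. A minimal sketch: `pick_device` and `detect_device` are hypothetical helpers, and whether WhisperJAV lets you pass a device through to its Transformers backend is not stated here.

```python
def pick_device(cuda_available: bool, mps_available: bool) -> str:
    """Prefer CUDA, then Apple Metal (MPS), then CPU."""
    if cuda_available:
        return "cuda"
    if mps_available:
        return "mps"
    return "cpu"


def detect_device() -> str:
    import torch  # imported lazily so pick_device works without torch installed

    # is_available() is False if this PyTorch build lacks MPS or macOS is too old.
    return pick_device(torch.cuda.is_available(), torch.backends.mps.is_available())


if __name__ == "__main__":
    try:
        print("Selected device:", detect_device())
    except ImportError:
        print("PyTorch is not installed in this environment")
```

As a stand-alone experiment outside WhisperJAV, the result can be passed to Hugging Face's `pipeline("automatic-speech-recognition", model="kotoba-tech/kotoba-whisper-bilingual-v1.0", device=detect_device())`; if that runs on `mps`, the CPU fallback is in how the app selects its device rather than in PyTorch.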

Edit: I do not believe the CPU mode worked; the .SRT file was 0 bytes:

```
2026-03-06 13:31:54 - whisperjav - INFO - TransformersASR transcribing with task='translate' - output should be in English
Whisper did not predict an ending timestamp, which can happen if audio is cut off in the middle of a word. Also make sure WhisperTimeStampLogitsProcessor was used during generation.
2026-03-06 14:22:21 - whisperjav - WARNING - Translation mode was requested but output appears to be in Japanese (14437/17022 chars are Japanese). This may indicate HuggingFace translation is not working as expected.
2026-03-06 14:22:21 - whisperjav - WARNING - Sample output: 皆さん、こんにちは。今日も始まりました。アイヘンリオンのビジルトレーニング、皆さん3分間、しっかりと頑張っていきましょう。今日も頑張っていきましょう。いいですね。ちょっと待って、一緒に一緒に頑張ります...
[DONE] Transcription complete: 1 segments
```
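The warning reports how much of the supposedly English output is actually Japanese (14437 of 17022 characters, about 85%). A rough check of that kind is easy to sketch; the Unicode ranges, helper names, and the 0.5 threshold are all assumptions, not WhisperJAV's actual rule.

```python
def is_japanese_char(ch: str) -> bool:
    """Rough check covering the main Japanese script blocks."""
    cp = ord(ch)
    return (
        0x3000 <= cp <= 0x30FF      # CJK symbols/punctuation, hiragana, katakana
        or 0x4E00 <= cp <= 0x9FFF   # CJK unified ideographs (kanji)
        or 0xFF66 <= cp <= 0xFF9F   # half-width katakana
    )


def japanese_ratio(text: str) -> float:
    """Fraction of non-whitespace characters that look Japanese."""
    chars = [c for c in text if not c.isspace()]
    if not chars:
        return 0.0
    return sum(is_japanese_char(c) for c in chars) / len(chars)


def looks_untranslated(text: str, threshold: float = 0.5) -> bool:
    # Hypothetical threshold; the real rule is not shown in the log.
    return japanese_ratio(text) > threshold
```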


I'll look into it over the weekend. Can you do a run with the debug option on? The debug flag is in the Adv. Options tab. If you could copy all the console output and send it to me, that would be helpful.

Also, have you tried Qwen3-ASR and anime-whisper? Do you get the same issues with those?

Meanwhile, I must say the performance of Transformers so far has been disappointing, so you're not missing much.
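If the app is launched from a terminal, one way to collect everything it prints (stdout and stderr together) into a single file to share is a small wrapper. A minimal sketch: `run_and_log` is a hypothetical helper, and the command you pass is whatever you normally use to launch WhisperJAV (not specified in this thread).

```python
import subprocess


def run_and_log(cmd: list[str], log_path: str) -> int:
    """Run `cmd`, writing its stdout and stderr to one file; return the exit code.

    Interleaving of the two streams can be imperfect if the child buffers its output.
    """
    with open(log_path, "w", encoding="utf-8") as log:
        proc = subprocess.run(cmd, stdout=log, stderr=subprocess.STDOUT, text=True)
    return proc.returncode
```

For example, `run_and_log(["your-launch-command", "..."], "console.log")` with your real command substituted, then attach `console.log`.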

I will run it tonight (I may be in a different time zone than you). I will also try to create a GitHub account(!) to post these issues/logs, if that is better.


I have tried anime-whisper as part of an ensemble, but... I am running into a similar problem with local LLMs as these users did: https://github.com/meizhong986/WhisperJAV/issues/196

So I have a couple of subtitles successfully generated with it and Balanced, but in Japanese.

Oh, you addressed it tonight, lol.

I did reduce Batch to 10, but that didn't help.

I will also try your other suggestion of lowering Scene Threshold to 30.

On a related note, is there a reason the Direct To English (Whisper) option is available in basic Transcription but not in Ensemble or Standalone? It might be useful for quick testing, as it seems to give decent results.

Of course, if I can get the local LLM thing to work, maybe that is just a superior solution.


I just released a beta for 1.8.7 which should fix the problem you're facing with the local LLM.

@mei2, may I ask: when I uninstall WhisperJAV, will all of the dependencies get removed as well?

Yes, if you used the uninstall script or the Windows uninstaller.

Hi,

May I have the link to the latest notebook version of WhisperJAV Colab Edition (Expert)?

You can find all the notebooks here: WhisperJAV notebooks

Hi,

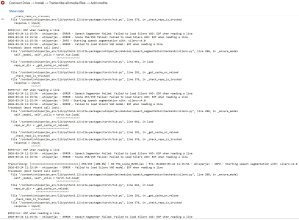

I want to report an error on WhisperJAV Colab Edition v1.8.5 (Expert). Please see the error details below. The options I selected for this run are also shown below.

I hope you can help us resolve the error.

Thank you so much.

Same here:

```
EOFError: EOF when reading a line
2026-03-25 18:33:15 - whisperjav - ERROR - Speech Segmenter failed: Failed to load Silero VAD: EOF when reading a line
2026-03-25 18:33:15 - whisperjav - ERROR - Scene 261/262 failed: Failed to load Silero VAD: EOF when reading a line
```
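`EOFError: EOF when reading a line` is what Python's built-in `input()` raises when it needs an answer but stdin is empty or closed, which is the normal situation for a background process in a notebook. That suggests (an assumption, not confirmed in this thread) that some step of the Silero VAD load is waiting for interactive input. The sketch only reproduces the error in a child process; `run_prompt_without_stdin` is a hypothetical name.

```python
import subprocess
import sys


def run_prompt_without_stdin() -> subprocess.CompletedProcess:
    # The child calls input(), as an interactive confirmation prompt would,
    # but its stdin is /dev/null, so input() hits end-of-file immediately.
    return subprocess.run(
        [sys.executable, "-c", "input('Trust this repo? (y/N) ')"],
        stdin=subprocess.DEVNULL,
        capture_output=True,
        text=True,
    )


if __name__ == "__main__":
    result = run_prompt_without_stdin()
    print("exit code:", result.returncode)
    print(result.stderr.strip().splitlines()[-1])
```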

So I tried the following.

Pre-download the model manually by running this in an interactive Python session first:

```python
import torch

torch.hub.load(repo_or_dir='snakers4/silero-vad', model='silero_vad', force_reload=False)
```

Once it's cached, it won't prompt again. Then try setting `trust_repo=True`, since my code calls `torch.hub.load` directly:

```python
torch.hub.load('snakers4/silero-vad', 'silero_vad', trust_repo=True)
```

This suppresses the interactive confirmation prompt.

Check your torch hub cache; the model may be partially downloaded or corrupted:

```bash
rm -rf ~/.cache/torch/hub/snakers4_silero-vad_master
```
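Doing the cache cleanup from Python avoids hard-coding `~/.cache/torch/hub`, since `torch.hub.get_dir()` reports the cache location actually in use (it can differ, e.g. when `TORCH_HOME` is set). A minimal sketch; `clear_cached_repo` is a hypothetical helper, and the folder name is the one from the `rm -rf` command.

```python
import shutil
from pathlib import Path


def clear_cached_repo(hub_dir: str, folder: str = "snakers4_silero-vad_master") -> bool:
    """Delete one cached torch.hub repo folder; return True if something was removed."""
    target = Path(hub_dir) / folder
    if target.is_dir():
        shutil.rmtree(target)
        return True
    return False
```

Usage: `import torch` and then `clear_cached_repo(torch.hub.get_dir())`, followed by a fresh `torch.hub.load(...)`.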

I then re-ran it (interactively first, to allow any prompts).

It still doesn't work, so I need the help of @mei2, AKA code master god.

-Besh


+ @Besh,

I usually get to do these on the weekend. It's a bummer when bugs show up so early in the week.

I have just pushed a new version of the notebook, as well as a new release.

The error should be gone now. I haven't tested it that rigorously, so please let me know if there are any issues.

You may see a syntax message that is harmless; I'll sort that out later.

The notebook is still using the feature set of 1.8.5. It hasn't yet wired in some of the newer releases' (1.8.10) features: ollama, qwen3-asr, speech-enhanced-vad.

I hope to bring the notebook up to date soon.
